TCP is the standard protocol for transmitting information on the Internet.

The web is moving towards an encrypted ecosystem with about 80% of the client requests deploying encryption. A popular web page requires an average of about 20 TLS over TCP connections to several hosts. Hence, 20 TCP and TLS handshakes are required for initiating these connections. Especially for short web flows, this handshake delay represents significant overhead.

The speed at which TCP data flows depends on two factors: transmission delay (the time needed to put the data on the wire, which is set by network bandwidth) and propagation delay (the time that the data takes to travel from one end to the other). Network bandwidth has increased steadily and substantially over the life of the Internet, so transmission delay has kept shrinking. Propagation delay, on the other hand, is a function of router latencies, which have not improved to the same extent as network bandwidth. Reducing the number of round trips required in a TCP conversation has thus become a subject of keen interest for companies that provide web services.
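To make that trade-off concrete, here is a rough back-of-the-envelope model in Python. It is only a sketch: the function name `transfer_time_ms`, the 15 KB payload, and the 100 ms round trip are illustrative assumptions, and the model ignores slow start, TLS, and packet loss.

```python
def transfer_time_ms(payload_bytes: int, bandwidth_mbps: float, rtt_ms: float,
                     round_trips: int = 2) -> float:
    """Rough time for a short request/response on a fresh TCP connection.

    round_trips=2 models one round trip for the handshake plus one for the
    request and response. Everything else (slow start, loss, TLS) is ignored.
    """
    # bits / (Mbit/s * 1000) gives milliseconds spent putting data on the wire
    transmission_ms = payload_bytes * 8 / (bandwidth_mbps * 1000)
    # time spent waiting for packets to cross the network and come back
    propagation_ms = round_trips * rtt_ms
    return transmission_ms + propagation_ms


slow_link = transfer_time_ms(15_000, bandwidth_mbps=10, rtt_ms=100)
fast_link = transfer_time_ms(15_000, bandwidth_mbps=100, rtt_ms=100)
one_less_rtt = transfer_time_ms(15_000, bandwidth_mbps=10, rtt_ms=100, round_trips=1)
```

Under these assumptions, a tenfold bandwidth increase moves the total from about 212 ms to about 201 ms, while dropping a single round trip on the slower link brings it down to about 112 ms.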

What is TCP Fast Open?

This is where TCP Fast Open (TFO) comes in handy. TFO is an extension that speeds up the opening of successive TCP connections between two endpoints. It works by using a TFO cookie, a cryptographic cookie carried in a TCP option, which the server issues during the initial connection and the client stores for later use. In other words, TFO is an optional mechanism within TCP that lets endpoints that have completed a full TCP handshake in the past eliminate one round trip of the handshake and send data right away. This speeds things up for endpoints that are going to keep talking to each other in the future and is especially beneficial on high-latency networks where time-to-first-byte is critical.

TCP Fast Open is defined in RFC 7413 which explains:

“TFO allows data to be carried in the SYN and SYN-ACK packets and consumed by the receiving end during the initial connection handshake, and saves up to one full round-trip time (RTT) compared to the standard TCP, which requires a three-way handshake (3WHS) to complete before data can be exchanged.”

According to the scientists who implemented TFO, it could result in speed improvements of between 4% and 41% in the page load times on popular web sites.
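Because TFO is optional, an application usually has to ask for it. The sketch below shows one way a Python server might request it on a listening socket. It assumes a platform whose `socket` module exposes `TCP_FASTOPEN` (Linux does), and the helper name `make_tfo_listener` and the queue length of 16 are hypothetical choices. On Linux the kernel setting `net.ipv4.tcp_fastopen` must also allow server-side TFO, which this code does not check.

```python
import socket


def make_tfo_listener(host: str = "127.0.0.1", port: int = 0, queue_len: int = 16):
    """Try to create a listening TCP socket with Fast Open enabled.

    Returns (sock, tfo_enabled). sock is None if no listener could be created.
    """
    tfo_opt = getattr(socket, "TCP_FASTOPEN", None)  # missing on some platforms
    try:
        sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    except OSError:
        return None, False
    try:
        sock.bind((host, port))
        sock.listen(128)
    except OSError:
        sock.close()
        return None, False

    enabled = False
    if tfo_opt is not None:
        try:
            # The value is the maximum number of pending Fast Open requests.
            sock.setsockopt(socket.IPPROTO_TCP, tfo_opt, queue_len)
            enabled = True
        except OSError:
            enabled = False  # the kernel refused; plain TCP still works
    return sock, enabled
```

If the option cannot be set, the listener simply behaves like an ordinary TCP socket. On the client side, Linux exposes the feature through the `MSG_FASTOPEN` flag to `sendto()`, which is not shown here.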


The TCP three-way handshake

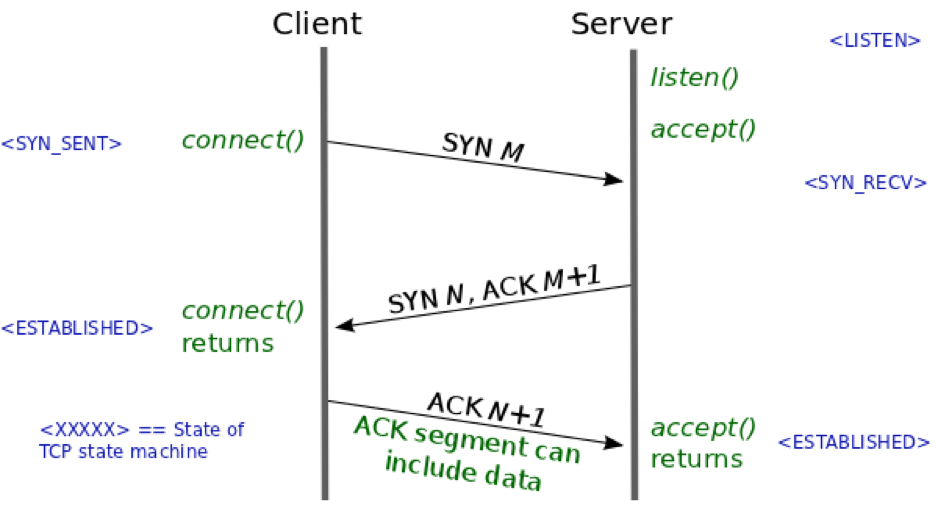

To understand the optimization performed by TFO, we need to note that each TCP conversation begins with a round trip in the form of the so-called three-way handshake. The three-way handshake is initiated when a client makes a connection request to a server. Figure 1 shows the details of the three-way handshake in diagrammatic form.

Figure 1: TCP three-way handshake between a client and a server. (source)

During the three-way handshake, the two TCP end-points exchange SYN (synchronize) segments containing options that govern the subsequent TCP conversation. The SYN segments also contain the initial sequence numbers (ISNs) that each end-point selects for the conversation (labeled M and N in Figure 1). From a security perspective, the three-way handshake allows the detection and blocking of duplicate SYN segments, so that only a single connection is created.

The problem with current TCP implementations is that data can be sent from the client to the server only in the third step of the three-way handshake (the ACK segment sent by the initiator). Thus, one full round trip time is lost before data is even exchanged between the peers. This lost RTT is a significant component of the latency of short web conversations.

How TCP Fast Open Works

Theoretically, the initial SYN segment could contain data sent by the initiator of the connection. RFC 793, the specification for TCP, permits data to be included in a SYN segment. However, TCP is prohibited from delivering that data to the application until the three-way handshake completes. This is a necessary security measure to prevent various kinds of malicious attacks. For example, if a malicious client sent a SYN segment containing data and a spoofed source address, and the server passed that segment to the server application before completion of the three-way handshake, then the segment would both cause resources to be consumed on the server and responses to be sent to the victim host whose address was spoofed.

The aim of TFO is to eliminate one round trip time from a TCP conversation by allowing data to be included as part of the SYN segment that initiates the connection. TFO is designed to do this in such a way that the security concerns described above are addressed.

"The TFO mechanism does not detect duplicate SYN segments"

Accepting data before the handshake completes has a cost: the TFO mechanism does not detect duplicate SYN segments. To mitigate this problem, TFO employs security cookies, called TFO cookies. The TFO cookie is generated once by the TCP server and returned to the TCP client for later reuse. The cookie is constructed by encrypting the client IP address under a secret key held by the server, in a fashion that is reproducible but difficult for an attacker to guess. Request, generation, and exchange of the TFO cookie happen entirely transparently to the application layer.
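A minimal sketch of such a cookie, written in Python. Note the substitution: instead of encrypting the client address, this example uses a keyed HMAC truncated to 8 bytes, because Python's standard library ships no block cipher. The property that matters is the same (reproducible for the server, hard to guess without the secret). The names `SERVER_SECRET`, `make_cookie`, and `cookie_is_valid` are hypothetical.

```python
import hashlib
import hmac
import ipaddress
import os

COOKIE_LEN = 8  # RFC 7413 allows cookies of 4 to 16 bytes

# Assumption: one random secret per server, kept private and rotated over time.
SERVER_SECRET = os.urandom(16)


def make_cookie(secret: bytes, client_ip: str) -> bytes:
    """Derive a cookie from the client address (HMAC stand-in for encryption)."""
    ip_bytes = ipaddress.ip_address(client_ip).packed
    return hmac.new(secret, ip_bytes, hashlib.sha256).digest()[:COOKIE_LEN]


def cookie_is_valid(secret: bytes, client_ip: str, cookie: bytes) -> bool:
    """Recompute the cookie for this address and compare in constant time."""
    return hmac.compare_digest(make_cookie(secret, client_ip), cookie)


cookie = make_cookie(SERVER_SECRET, "192.0.2.10")
```

Because the cookie is recomputed from the source address on every SYN, the server does not need to keep per-client state, and a cookie presented from a different address fails validation.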

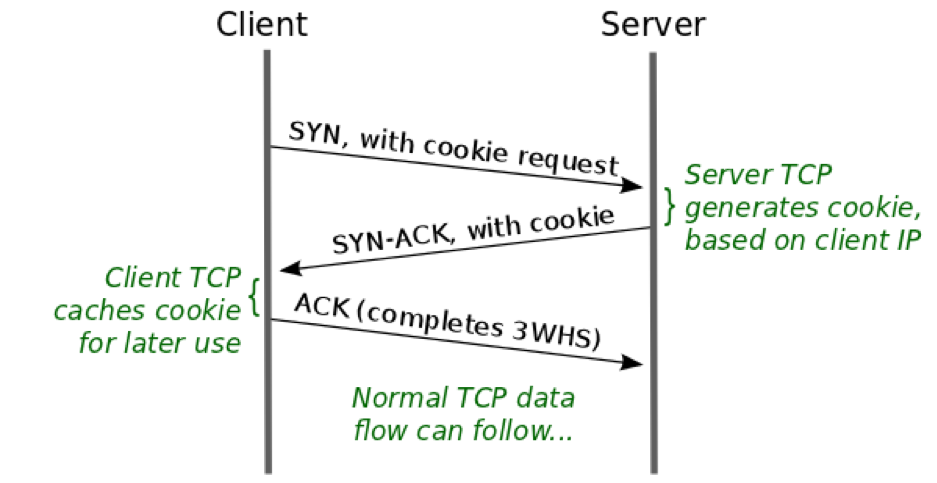

At the protocol layer, during the initial connection the client requests a TFO cookie by sending a SYN segment to the server that includes a special TCP option asking for a TFO cookie. The SYN segment is otherwise “normal”. There is no data in the segment and establishment of the connection still requires the normal three-way handshake. In response, the server generates a TFO cookie that is returned in the SYN-ACK segment that the server sends to the client. The client caches the TFO cookie for later use. The steps in the generation and caching of the TFO cookie are shown in Figure 2.

Figure 2: TFO Cookie generation. (source)
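At the byte level, the cookie request and the cookie itself travel in the same TCP option. RFC 7413 assigns it option kind 34, followed by a length byte and then the cookie, which is empty when the client is only asking for one. The Python sketch below encodes and decodes that option; the helper names are hypothetical and the length check is simplified.

```python
import struct

TFO_OPTION_KIND = 34  # TCP option kind assigned to Fast Open in RFC 7413


def encode_tfo_option(cookie: bytes = b"") -> bytes:
    """Build the Fast Open option. An empty cookie means 'please send me one'."""
    if cookie and not 4 <= len(cookie) <= 16:
        raise ValueError("a TFO cookie must be 4 to 16 bytes long")
    # The length byte counts the kind and length bytes plus the cookie.
    return struct.pack("!BB", TFO_OPTION_KIND, 2 + len(cookie)) + cookie


def decode_tfo_option(raw: bytes) -> bytes:
    """Return the cookie carried in a Fast Open option (b'' for a request)."""
    if len(raw) < 2:
        raise ValueError("option too short")
    kind, length = struct.unpack("!BB", raw[:2])
    if kind != TFO_OPTION_KIND or length != len(raw):
        raise ValueError("not a well-formed Fast Open option")
    return raw[2:]


cookie_request = encode_tfo_option()                 # sent in the first SYN
cookie_reply = encode_tfo_option(b"\x01" * 8)        # returned in the SYN-ACK
```

The client would cache the bytes returned by `decode_tfo_option(cookie_reply)` and present them in the option on its next SYN to the same server.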

At this point, the client has a token that it can use to prove to the server that an earlier three-way handshake to the client's IP address completed successfully. For subsequent conversations with the server, the client can short circuit the three-way handshake as shown in Figure 3.

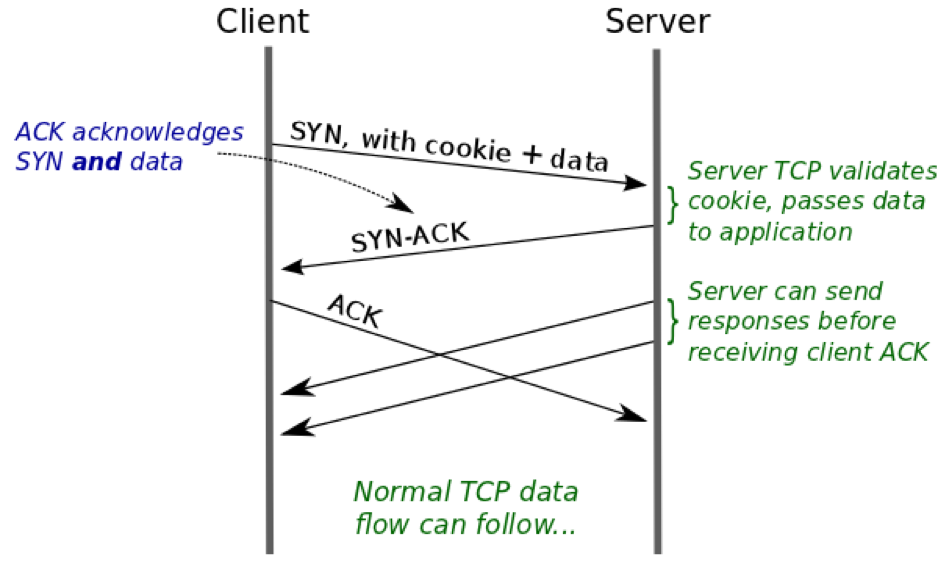

Figure 3: Subsequent use of TFO Cookie. (source)

The steps shown in Figure 3 can be described as follows:

- The client sends a SYN that contains both the TFO cookie (specified as a TCP option) and data from the client application.

- The server validates the TFO cookie. If the cookie proves to be valid, then the server can be confident that this SYN comes from the address it claims to come from. This means that the server can immediately pass the application data to the server application.

- From here on, the TCP conversation proceeds as normal: the server TCP sends a SYN-ACK segment to the client, which the client then acknowledges, thus completing the three-way handshake. The server can also send response data segments to the client before it receives the client's ACK.

In the above steps, if the server cannot validate the TFO cookie, it discards the data and sends the client a segment that acknowledges just the SYN. The TCP conversation then falls back to the normal three-way handshake.
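The server's choice between the fast path and the fallback can be sketched as a small decision function. This is an illustration of the steps described above, not kernel code; `SynDecision`, `handle_syn`, and the `is_valid` callback are hypothetical names.

```python
from typing import Callable, NamedTuple, Optional


class SynDecision(NamedTuple):
    delivered: bytes   # SYN payload handed to the application right away
    acked_bytes: int   # payload bytes acknowledged by the SYN-ACK


def handle_syn(cookie: Optional[bytes], payload: bytes,
               is_valid: Callable[[bytes], bool]) -> SynDecision:
    """Decide what a TFO-capable server does with data carried in a SYN."""
    if cookie is not None and is_valid(cookie):
        # Valid cookie: the source address is trusted, so deliver the data now.
        return SynDecision(delivered=payload, acked_bytes=len(payload))
    # Missing or invalid cookie: drop the data and acknowledge only the SYN.
    # The client resends the data after a normal three-way handshake.
    return SynDecision(delivered=b"", acked_bytes=0)


known_cookie = b"\xaa" * 8
fast_path = handle_syn(known_cookie, b"GET / HTTP/1.1\r\n\r\n",
                       is_valid=lambda c: c == known_cookie)
fallback = handle_syn(b"\xbb" * 8, b"GET / HTTP/1.1\r\n\r\n",
                      is_valid=lambda c: c == known_cookie)
```

In the fallback case nothing is lost from the application's point of view: the request simply arrives one round trip later, as it would over standard TCP.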

Comparing Figure 1 and Figure 3, we can see that a complete RTT has been saved in the conversation between the client and server. The client can repeat many TFO operations once it acquires a cookie until the cookie is expired by the server. Thus, TFO is useful for applications in which the same client reconnects to the same server multiple times and exchanges data.
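The saving can also be read off a simple event timeline. The sketch below assumes a symmetric one-way delay of half the round-trip time and zero processing time at both ends; the function name `handshake_timeline` and the event labels are illustrative only.

```python
def handshake_timeline(rtt_ms: float, use_tfo: bool):
    """List (time_ms, event) pairs up to the first response reaching the client."""
    one_way = rtt_ms / 2
    if use_tfo:
        return [
            (0.0, "client sends SYN + cookie + request"),
            (one_way, "server validates cookie, passes request to application"),
            (rtt_ms, "client receives SYN-ACK and response"),
        ]
    return [
        (0.0, "client sends SYN"),
        (one_way, "server sends SYN-ACK"),
        (rtt_ms, "client sends ACK + request"),
        (rtt_ms + one_way, "server passes request to application"),
        (2 * rtt_ms, "client receives response"),
    ]


standard = handshake_timeline(100.0, use_tfo=False)
fast_open = handshake_timeline(100.0, use_tfo=True)
saved_ms = standard[-1][0] - fast_open[-1][0]
```

With a 100 ms round trip, the first response arrives after 200 ms over the standard handshake and after 100 ms with TFO, so `saved_ms` is exactly one round trip.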

TCP Fast Open vs. TLS 1.3 0-RTT

TFO's functionality is quite similar to TLS 1.3 0-RTT, also known as TLS early data. TLS 0-RTT is a method of reducing the time needed to set up a TLS connection. For first-time connections, TLS 1.3 requires just 1-RTT (a single round trip) of the protocol, making it quite a bit faster than its predecessors. For connections to a server where the client and the server possess a pre-shared key (PSK), the client may choose to encrypt early data under this key and send it along with the ClientHello. This allows the server to respond with the requested data immediately after its own ServerHello messages. This cuts an entire round trip off the communication: zero round trip time. In a mobile environment this may save a significant amount of time.

Of course, shaving off a round trip comes with certain trade-offs, since a 0-RTT request cannot prevent a replay attack. 0-RTT solutions require sending key material and encrypted data from the client without waiting for any feedback from the server. At a minimum, that means that adversaries can capture and replay the messages, which implies that the feature has to be used with great care. In addition to that, there are many potential pitfalls, such as compromising privacy by carrying identifiers in clear text in the Hello message, or risking future compromise if the initial encryption depends on a server public key.
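The replay risk is easy to demonstrate with a toy model. In the Python sketch below, a server that acts on early data as soon as it arrives processes a captured message twice, while one that remembers what it has already seen rejects the second copy. The class `EarlyDataServer` is hypothetical, and the seen-set is only a stand-in: real TLS stacks rely on their own anti-replay mechanisms, which are not modeled here.

```python
import hashlib


class EarlyDataServer:
    """Toy server that counts how many early-data messages it acts on."""

    def __init__(self, remember_seen: bool):
        self.remember_seen = remember_seen
        self.seen = set()
        self.processed = 0

    def receive(self, early_data: bytes) -> bool:
        digest = hashlib.sha256(early_data).digest()
        if self.remember_seen and digest in self.seen:
            return False  # duplicate: refuse to act on it again
        self.seen.add(digest)
        self.processed += 1
        return True


captured = b"POST /transfer amount=100"  # message recorded by an attacker

naive = EarlyDataServer(remember_seen=False)
guarded = EarlyDataServer(remember_seen=True)
for server in (naive, guarded):
    server.receive(captured)  # original message from the client
    server.receive(captured)  # attacker replays the same bytes
```

After the loop, `naive.processed` is 2 and `guarded.processed` is 1. This is why early data is best limited to requests that are harmless to repeat.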

TCP Fast Open privacy concerns

Although TCP Fast Open provides considerable latency improvements compared to normal TCP three-way handshake, its usage on the Internet raises alarming privacy concerns. TFO can be used to link TFO sessions and thus be exploited by an online tracker to collect profiles of the browsing behavior of users. Researchers from the University of Hamburg have identified that “TFO messages are unencrypted”, and hence they can be used for user tracking through passive network monitoring. What is more alarming is the fact that tracking via the TFO protocol is “independent of traditional tracking practices utilizing HTTP cookies or browser fingerprinting. Thus, existing countermeasures against conventional tracking do not protect against tracking via TFO.”
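To see why unencrypted cookies enable linking, consider what a passive observer could do with nothing more than captured SYN segments. The sketch below groups observations by server and cookie value; any cookie seen more than once ties those connections together. The function name and the record layout `(timestamp, source_ip, server_ip, cookie)` are assumptions made for illustration, not the researchers' method.

```python
from collections import defaultdict


def link_sessions_by_cookie(observed_syns):
    """Group observed SYNs that present the same cookie to the same server.

    observed_syns: iterable of (timestamp, source_ip, server_ip, cookie) tuples.
    Returns only the groups with more than one observation.
    """
    groups = defaultdict(list)
    for timestamp, source_ip, server_ip, cookie in observed_syns:
        groups[(server_ip, cookie)].append((timestamp, source_ip))
    return {key: seen for key, seen in groups.items() if len(seen) > 1}


observations = [
    (1000, "198.51.100.7", "203.0.113.5", b"\x11" * 8),
    (1600, "198.51.100.7", "203.0.113.5", b"\x11" * 8),
    (1700, "198.51.100.9", "203.0.113.5", b"\x22" * 8),
]
linked = link_sessions_by_cookie(observations)
```

Here the first two connections are linked through their shared cookie, without the observer touching HTTP cookies or browser fingerprints.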

"Existing countermeasures do not protect against tracking via TFO"

To address the privacy problems, the researchers designed and implemented the TCP Fast Open Privacy (TCP FOP) protocol. Their analysis and results indicate that “TLS over TCP FOP fully protects against user tracking by network-based attackers. Furthermore, the protocol can also restrict third-party tracking and enables each application to control its privacy properties and to balance the trade-off between lower delays and privacy protection.”

(This post has been updated. It was originally published on September 5, 2019.)
