I recently worked with a client on their public key infrastructure (PKI). After a few short discussions, it became evident that they were ill-prepared for a successful implementation. In particular, they had significant challenges managing the SSL/TLS certificates that serve as machine identities across their diverse infrastructure. Like most organizations today, they had already started their digital transformation journey. But as the scale of that project increased, they were grappling with changes like moving from on-premises to the cloud and rearchitecting applications from the ground up. In addition, the organization had been plagued by operational pain and inconsistent quality of talent, which left them with a patchwork of technologies spread across on-premises systems and various cloud providers.

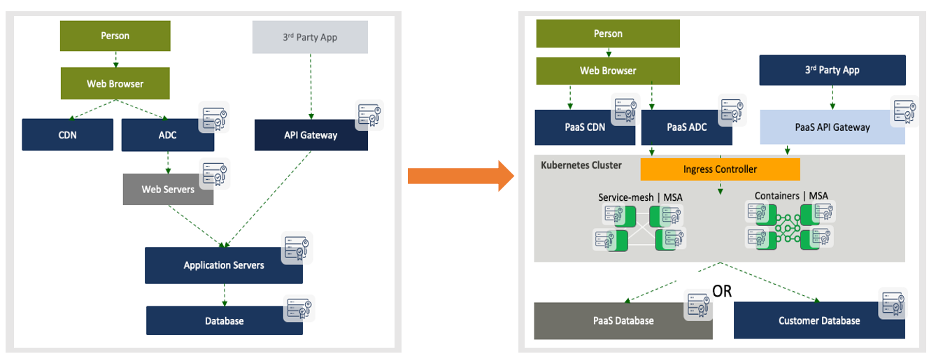

From an application perspective, this is the journey the organization was following.

Furthermore, as we zeroed in on the PKI environment, we found that it was also showing signs of disarray. After several rounds of discussions, the root cause became clear. The organization had built several layers of complex PKI throughout its infrastructure, both on-premises and in the cloud, with no standardized controls or approaches for using them. This oversight was proving costly in developer and DevOps engineering time. In addition, security teams felt things were getting out of hand, since many certificates were neither controlled by nor visible to the InfoSec and PKI teams. Even worse, security teams couldn’t inspect traffic because they didn’t have access to the private keys. Because of these shortcomings, the information security team was concerned that an attacker could use encrypted tunnels to go undetected.

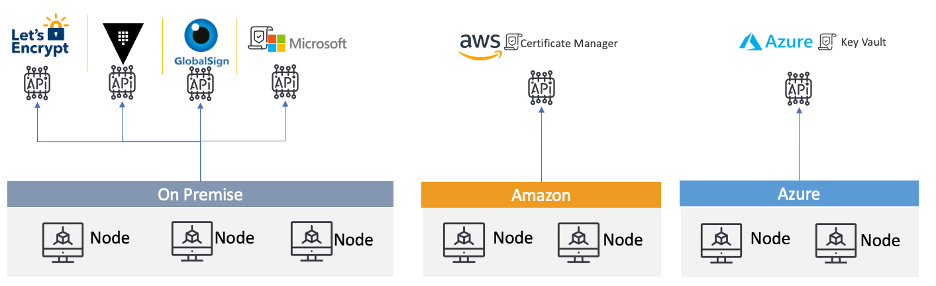

This is how their PKI was set up:

As you can see, they were obtaining certificates from many different sources, both on-premises and in the cloud, ranging from Let’s Encrypt and GlobalSign to AWS Certificate Manager and Azure Key Vault. They also had multiple instances of AWS and Azure.

My goal for this client was to streamline their PKI approach by removing unnecessary complexity and giving developers building blocks to request certificates quickly through automation, while also addressing security concerns.

Here is how I tackled the challenge.


Streamlining Certificate Processes to Address DevOps Security Challenges

Rather than reinvent the wheel, I turned to NIST for a blueprint that we could practically follow for TLS certificate management. Sparing you all the details, I read through several documents on cryptography and landed on a new guidance document: NIST SP 1800-16B, Securing Web Transactions: TLS Server Certificate Management.

After studying this detailed guidance, I distilled several clear, immediate recommendations around these themes:

- Simplify processes by offering a certificate service to developers

- Provide security teams control and visibility over certificates in use

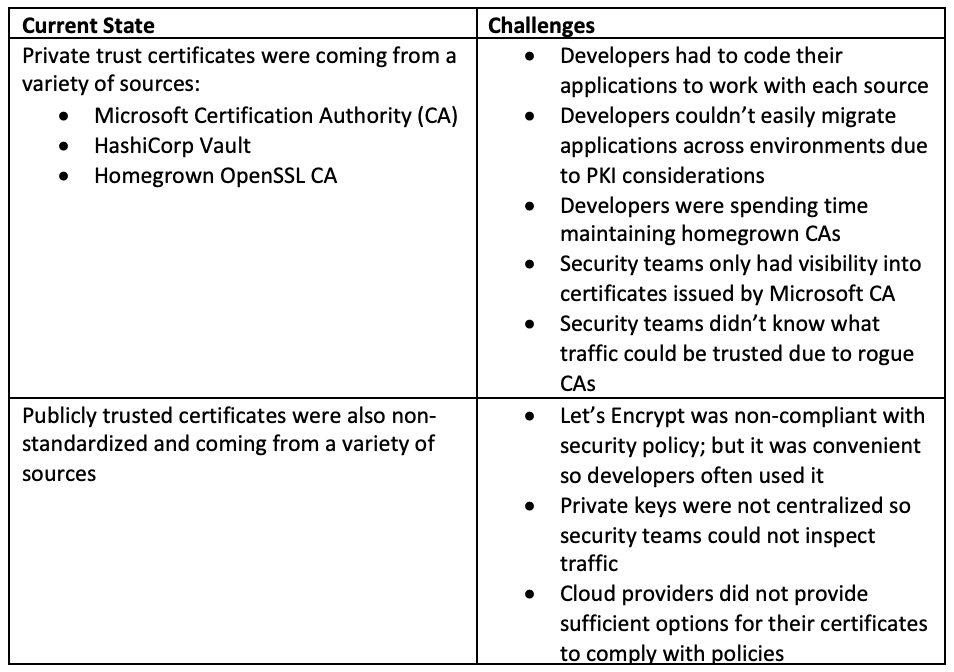

Current State

Challenges

Privately trusted certificates were coming from a variety of sources:

- Microsoft Certification Authority (CA)

- HashiCorp Vault

- Homegrown OpenSSL CA

This caused several problems:

- Developers had to code their applications to work with each source

- Developers couldn’t easily migrate applications across environments due to PKI considerations

- Developers were spending time maintaining homegrown CAs

- Security teams only had visibility into certificates issued by the Microsoft CA

- Security teams didn’t know what traffic could be trusted because of rogue CAs

Publicly trusted certificates were also not standardized and came from a variety of sources:

- Let’s Encrypt did not comply with security policy, but it was convenient, so developers often used it

- Private keys were not centralized, so security teams could not inspect traffic

- Cloud providers’ certificate services did not offer enough options to comply with policy

My recommendations were to:

- Standardize CAs across on-premises and cloud providers

- Use Jetstack cert-manager to automate certificate life cycles for Kubernetes clusters

- Use Venafi as a Service (formerly called Venafi Cloud) to connect certificate authorities to DevOps workflows via native integrations, enforce policy (e.g., certificate attributes and sources), and provide visibility for audits and compliance

Ultimately, these choices helped streamline certificate management workflows in multi-cloud Kubernetes environments and provided a unified API for interacting with different CAs.

How to Connect Kubernetes to Venafi as a Service

Technologies Used

- Jetstack cert-manager for Kubernetes (and OpenShift)

- Venafi as a Service

Step 1. Get a Venafi as a Service account: https://vaas.venafi.com

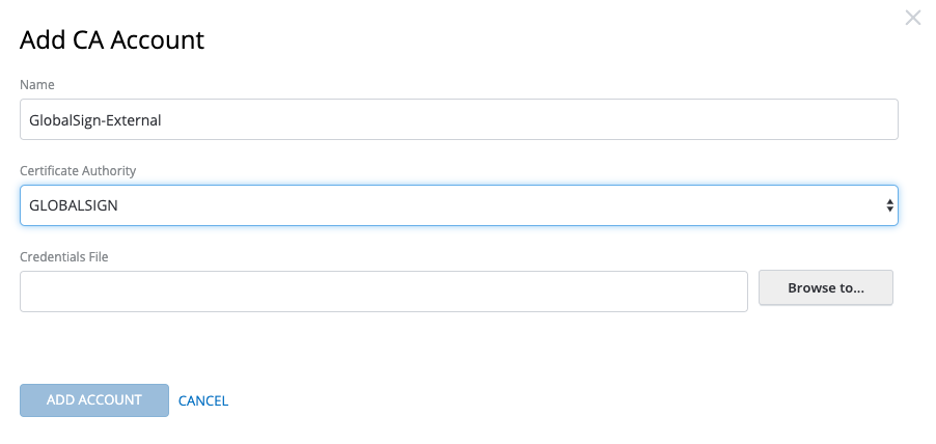

Step 2. Configure certification authority accounts

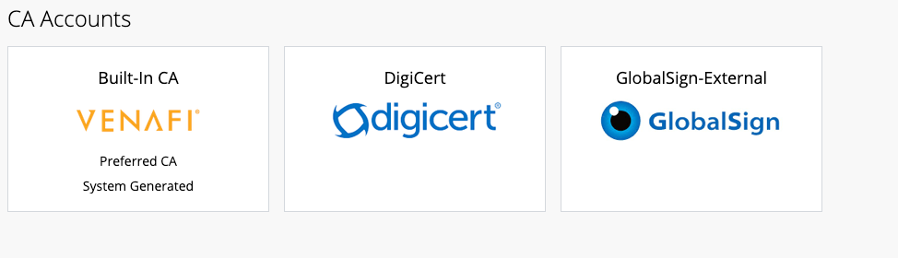

On the left-hand menu, select “CA accounts” and set up your CA accounts, adding a new account for each provider. In my case, I needed two public CAs (GlobalSign and DigiCert) and one Venafi-provided Built-in CA, which is going to replace the homegrown OpenSSL CA.

The end result should look like this:

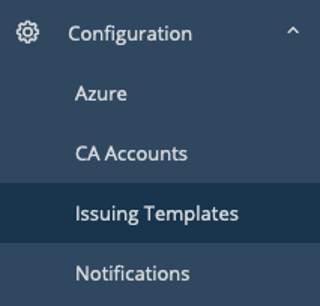

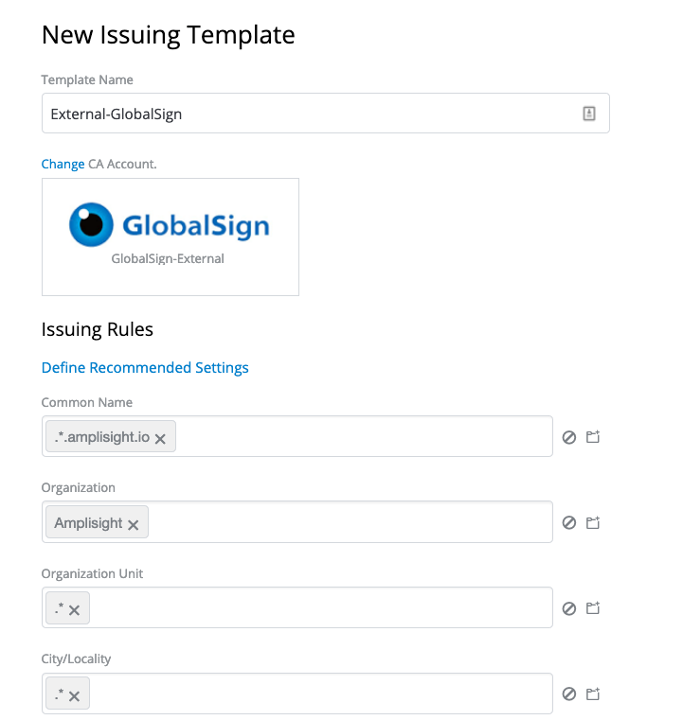

Step 3. Set up Issuing Templates

Issuing templates tie a certification authority to a set of certificate attributes; together, these make up your security policy. You’ll likely have multiple issuing templates, since you will need publicly trusted certificates with longer validity periods as well as private certificates for back-end infrastructure such as containers and service meshes.

Note: If you want domains validated before issuance (recommended), use a pattern such as .*.yourdomain.com so that it matches your domain.

Step 4. Configure Projects and Zones

In this example, we are going to create a Cloud Project and grant the team the ability to issue both public and private certificates.

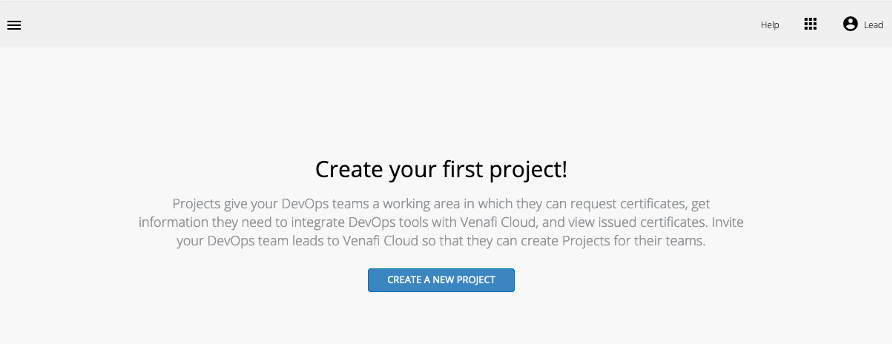

To issue certificates, we first create a Project, which lets us segment certificate issuance by application.

Select “Create A New Project” and give the project a name, e.g. “Cloud.”

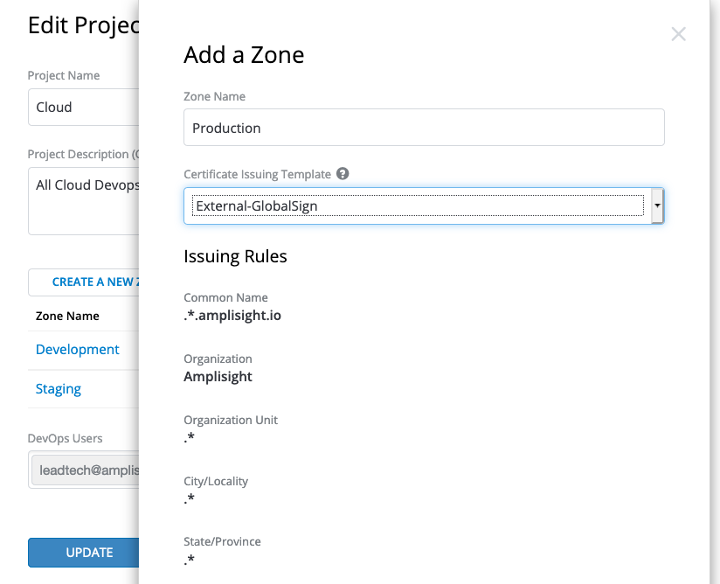

Then, add a Zone. A Zone represents an environment, e.g., development, staging, production.

Since this is the first time using Venafi as a Service, I’ll create zones and tie them to the issuing templates. This connects an application’s environment to the certificate source and attributes as defined in the issuing template (aka security policy).

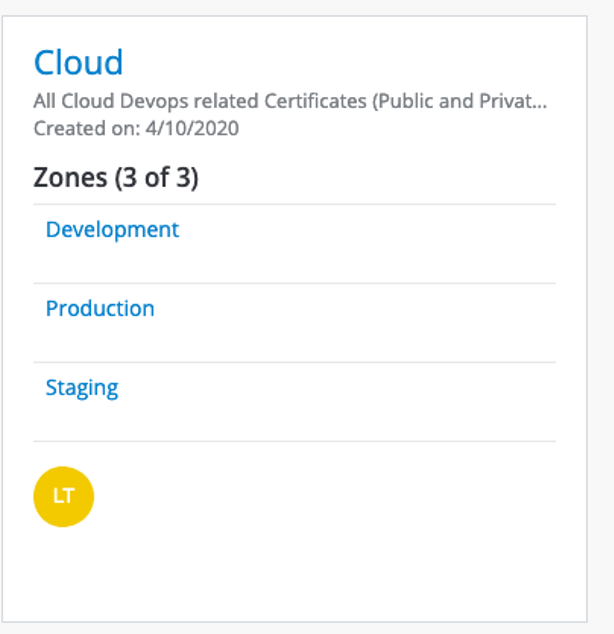

Step 5. Grab Zone IDs to use for issuing

Once you’ve finished the previous step, your project should be listed. Click on it; in this case, our project is named “Cloud.”

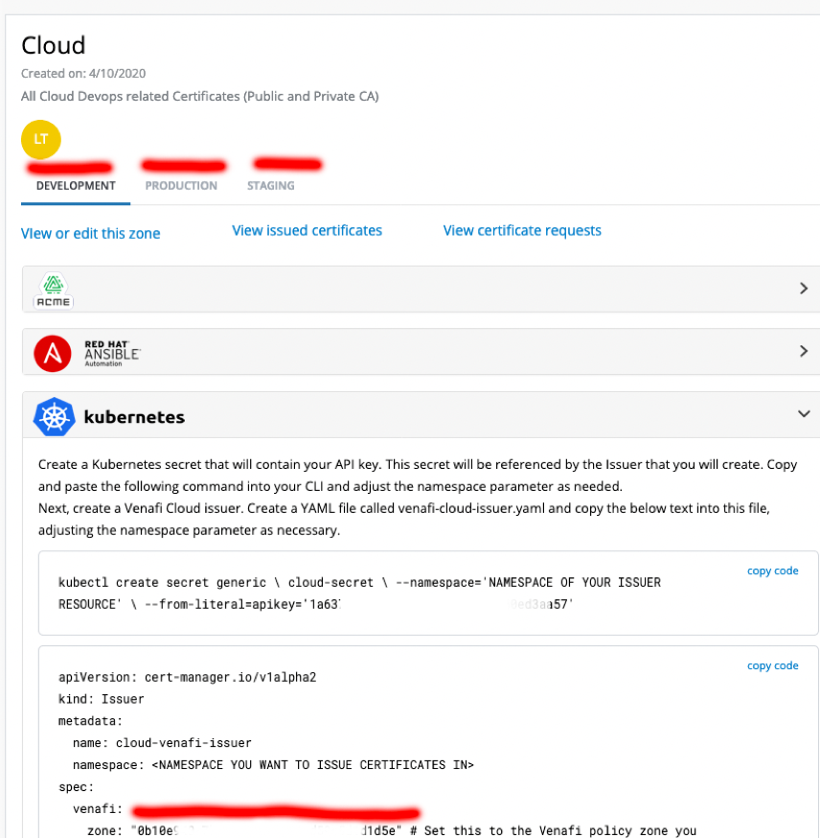

Once the project is open, you will see each Zone tied to our issuing templates (which define the CA and certificate attributes). Click on each tab (Development, Production, Staging) and copy the Zone IDs. We’ll use these in our YAML files to create the issuers in cert-manager. An example is highlighted in the following screenshot:

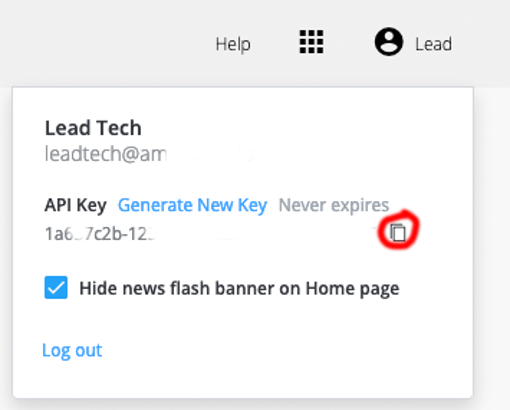

Step 6. Grab the API key and store it for use later

We’ll need this key to authenticate our certificate requests to the Venafi as a Service API broker.

Now that we have the information we need to start issuing certificates, let’s install Jetstack cert-manager so we can request certificates from the various CA providers for use in our Kubernetes environment.

Step 7. Install Jetstack cert-manager

First, let’s create a Kubernetes namespace specifically for cert-manager.

kubectl create namespace cert-manager

Then install cert-manager from the v0.14.2 release manifest:

kubectl apply -f https://github.com/jetstack/cert-manager/releases/download/v0.14.2/cert-manager.yaml

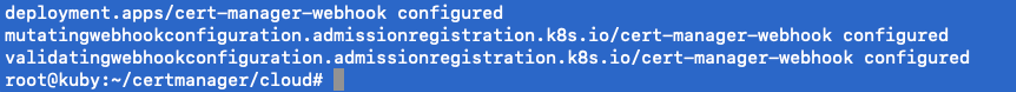

If all went well, you should see something like this.

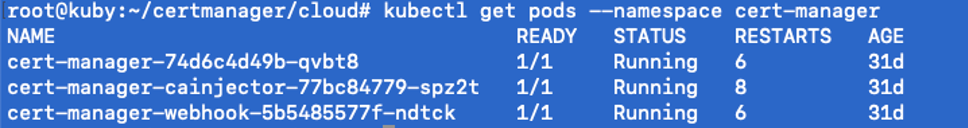

Run the following to check the status of the cert-manager pods. Once they are all Running, you’re ready to move on to the next step:

kubectl get pods --namespace cert-manager

Step 8. Set up the API key as a secret within Kubernetes

This is the API key you grabbed in Step 6.

The secret must live in the same namespace as the issuers that reference it. If you are using a different namespace, change the --namespace value from default.

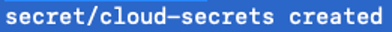

kubectl create secret generic cloud-secrets --namespace='default' --from-literal=apikey='1a637c2b-125f-0000-82ea-c7b60ed00000'
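If you’d rather manage the secret declaratively alongside your other manifests, the sketch below should be equivalent to the command above. The file name cloud-secrets.yaml and the key value are placeholders. The stringData field accepts the key as plain text, and Kubernetes stores it base64-encoded under data. Because the file contains the API key, keep it out of source control.

```yaml
# cloud-secrets.yaml (hypothetical file name): declarative equivalent of
# the "kubectl create secret generic cloud-secrets" command above
apiVersion: v1
kind: Secret
metadata:
  name: cloud-secrets   # referenced by name from each Venafi issuer
  namespace: default    # same namespace as the issuers that use it
type: Opaque
stringData:
  # placeholder: replace with the API key from Step 6
  apikey: "<your-venafi-as-a-service-api-key>"
```

Apply it with kubectl apply -f cloud-secrets.yaml.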

Should return:

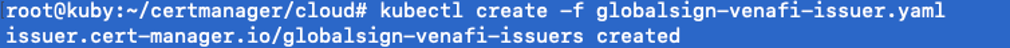

Step 9. Set up the issuers that you want to configure

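In our case, we have three certificate sources (the built-in CA, GlobalSign, and DigiCert), so we need to create three issuers, one per YAML file.

Note the issuer name and Zone ID in each file; the Zone ID is the one you copied in Step 5.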
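The YAML files themselves aren’t reproduced here, so the following is a minimal sketch of what globalsign-cloud-issuer.yaml might contain. It assumes the Venafi issuer format of the cert-manager v0.14 release installed in Step 7, which uses the cert-manager.io/v1alpha2 API (newer releases use cert-manager.io/v1). The Zone ID is a placeholder for the value copied in Step 5, and the secret name and key match the cloud-secrets secret created in Step 8. The other two files would presumably differ only in the issuer name and Zone ID.

```yaml
# globalsign-cloud-issuer.yaml (sketch): a namespaced cert-manager issuer
# that sends certificate requests to Venafi as a Service
apiVersion: cert-manager.io/v1alpha2
kind: Issuer
metadata:
  name: globalsign-venafi-issuer   # the name Certificates refer to
  namespace: default               # must match the namespace of cloud-secrets
spec:
  venafi:
    # placeholder: Zone ID copied from the project's zone in Step 5;
    # the zone's issuing template decides which CA (here GlobalSign) is used
    zone: "<globalsign-zone-id>"
    cloud:
      # API key stored as a Kubernetes secret in Step 8
      apiTokenSecretRef:
        name: cloud-secrets
        key: apikey
```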

kubectl create -f venafi-cloud-issuer.yaml

kubectl create -f globalsign-cloud-issuer.yaml

kubectl create -f digicert-cloud-issuer.yaml

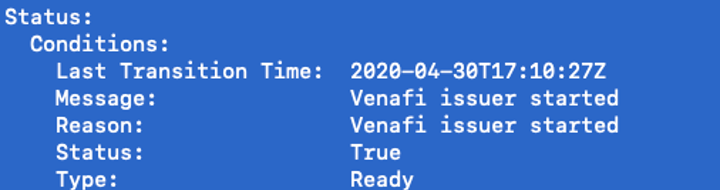

To confirm that each issuer is ready, describe it:

kubectl describe issuer globalsign-venafi-issuer --namespace=default

kubectl describe issuer cloud-venafi-issuer --namespace=default

Run the same check for the DigiCert issuer, using the issuer name defined in digicert-cloud-issuer.yaml.

Step 10. Try it out!

Now that cert-manager and our issuers are set up, we can start requesting certificates. Note that cert-manager also automatically attempts to renew certificates before they expire, which is very handy.

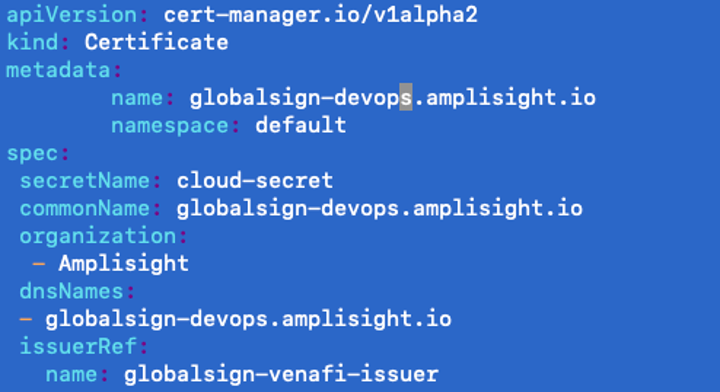

In my example, I’ll request one certificate from our internal CA and one from an external (public) CA. An example request format is shown below.
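As a rough guide, examplecert.yml could look something like the sketch below, again assuming the cert-manager.io/v1alpha2 API from the v0.14 release. It requests one certificate through the GlobalSign issuer and one through cloud-venafi-issuer, which is assumed here to point at the built-in (private) CA zone. The globalsign-devops.amplisight.io name matches the troubleshooting example in Step 11; the second certificate’s name, the DNS names, the secret names, and the renewBefore value are hypothetical. The requested names also need to satisfy the domain pattern in the zone’s issuing template, or Venafi as a Service should refuse the request.

```yaml
# examplecert.yml (sketch): one public and one private certificate
apiVersion: cert-manager.io/v1alpha2
kind: Certificate
metadata:
  name: globalsign-devops.amplisight.io
  namespace: default
spec:
  secretName: globalsign-devops-amplisight-io-tls  # hypothetical; cert-manager stores the key pair here
  commonName: devops.amplisight.io                 # hypothetical hostname
  dnsNames:
    - devops.amplisight.io
  renewBefore: 360h                                # illustrative: begin renewal 15 days before expiry
  issuerRef:
    name: globalsign-venafi-issuer                 # public CA via Venafi as a Service
    kind: Issuer
---
apiVersion: cert-manager.io/v1alpha2
kind: Certificate
metadata:
  name: internal-devops.amplisight.io              # hypothetical name
  namespace: default
spec:
  secretName: internal-devops-amplisight-io-tls    # hypothetical
  commonName: internal.devops.amplisight.io        # hypothetical hostname
  dnsNames:
    - internal.devops.amplisight.io
  issuerRef:
    name: cloud-venafi-issuer                      # assumed to use the built-in CA zone
    kind: Issuer
```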

Running the request:

The following command submits the certificate request:

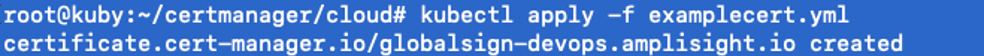

kubectl apply -f examplecert.yml

View your request here:

https://ui.venafi.cloud/certificates/inventory

Step 11. Troubleshooting

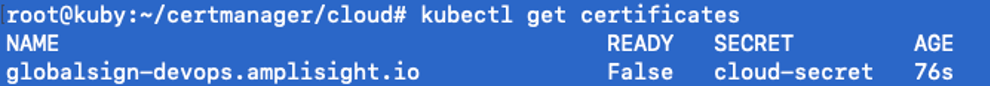

The first troubleshooting step is to check the status of your existing certificates. Running kubectl get certificates shows what is currently in inventory and whether each certificate is ready:

kubectl get certificates

If the READY column shows False (it should be True), dig a little deeper and get the relevant status messages using:

kubectl describe certificates globalsign-devops.amplisight.io

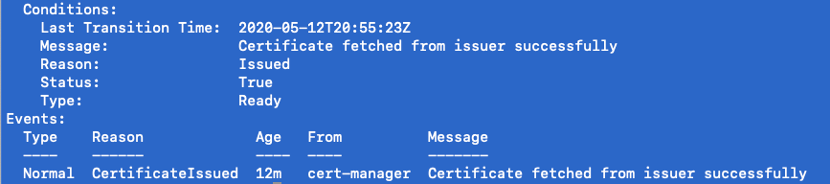

The output lists the certificate request in its status message, along with the current status. As shown above, each certificate request is assigned a unique name in the format <certificate name>-<unique ID>.

To see more detail on potential issuance errors, describe the certificate request itself:

kubectl describe certificaterequest globalsign-devops.amplisight.io-23434489

In our case, we were just a little (or a lot!) impatient and needed to allow the issuer time to issue the certificate.

Take the Next Step

Want to go a step further? Check out this guide on setting up an nginx ingress controller and automatically applying a certificate: https://github.com/jetstack/cert-manager-nginx-plus-lab
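The linked lab covers this in detail. As a rough sketch of the mechanism, cert-manager’s ingress-shim watches Ingress resources carrying the cert-manager.io/issuer annotation and requests a certificate for the hosts listed under tls, storing the result in the named secret. The Ingress name, host, Service, and secret name below are hypothetical, the manifest assumes an NGINX ingress controller registered under the nginx class, and it uses the networking.k8s.io/v1 Ingress API (Kubernetes 1.19 and later).

```yaml
# ingress-with-cert.yaml (sketch): cert-manager obtains a certificate for
# the tls hosts and writes it to secretName; the controller serves it
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: devops-web                                  # hypothetical
  namespace: default
  annotations:
    cert-manager.io/issuer: globalsign-venafi-issuer  # namespaced issuer from Step 9
spec:
  ingressClassName: nginx                           # assumes an NGINX ingress controller
  tls:
    - hosts:
        - devops.amplisight.io                      # hypothetical hostname
      secretName: devops-web-tls                    # cert-manager stores the certificate here
  rules:
    - host: devops.amplisight.io
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: devops-web                    # hypothetical Service
                port:
                  number: 80
```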

